Your cluster management platform should support your choice of hardware

A cluster built today will run workloads for four, five, maybe seven years. The management platform shapes which hardware you can deploy, which schedulers you can run, and how painful it is to adapt when requirements change. If your provisioning system is tied to a specific hardware vendor, every procurement negotiation starts with a constraint you didn't choose.

Hardware competition only helps if your platform keeps up

HPC teams now have competitive options across every major component:

| Component | Viable options |
|---|---|
| CPU | Intel Xeon, AMD EPYC, ARM-based (Ampere, AWS Graviton) |
| GPU | NVIDIA A100/H100/H200, AMD Instinct MI300X, Intel Data Center GPU Max |
| Interconnect | InfiniBand, Omni-Path, high-speed Ethernet with RoCE, HPE Slingshot |

This competition drives pricing pressure and innovation, but you only benefit if your cluster management platform supports all of these options equally.

The cluster management market itself is consolidating. NVIDIA acquired Bright Computing, a popular cluster management provider, in 2022. Consolidation is natural in maturing markets, but it makes platform flexibility even more important for organizations that want to evaluate hardware from multiple vendors over a cluster's lifetime.

Platform lock-in inflates every hardware quote

HPC procurement works best when you can credibly evaluate multiple vendors. Platform dependency weakens that position in two ways:

First, switching costs inflate comparisons. If moving from one GPU vendor to another means rearchitecting your provisioning workflow, every competing quote carries a hidden surcharge. Vendors know this.

Second, information asymmetry works against you. The platform vendor can see your deployment scale, renewal timeline, and support tickets, and can price accordingly, while you may have little visibility into how that information shapes your quote.
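To make the switching-cost point concrete, here is a minimal sketch of how a hidden surcharge changes a quote comparison. The names (`Quote`, `effective_cost`, `cheapest`) and every figure are hypothetical placeholders for illustration, not real prices.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    vendor: str
    hardware_cost: float   # quoted hardware price (hypothetical)
    switching_cost: float  # cost of reworking provisioning to adopt this vendor


def effective_cost(q: Quote) -> float:
    """Quoted price plus the cost of switching to this vendor."""
    return q.hardware_cost + q.switching_cost


def cheapest(quotes: list[Quote]) -> Quote:
    """Pick the quote with the lowest effective cost."""
    return min(quotes, key=effective_cost)


# Hypothetical figures: the challenger's hardware is cheaper on paper.
incumbent = Quote("incumbent", 1_000_000, 0)
challenger_locked = Quote("challenger", 900_000, 150_000)  # platform tied to incumbent
challenger_neutral = Quote("challenger", 900_000, 0)       # vendor-neutral platform

locked_in = cheapest([incumbent, challenger_locked])
neutral = cheapest([incumbent, challenger_neutral])
print(locked_in.vendor, neutral.vendor)
```

With these assumed numbers, the cheaper hardware loses the comparison once the switching cost is added, and wins it when the platform imposes none.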

Organizations with vendor-neutral cluster management retain the ability to evaluate any hardware that fits their technical requirements. They can credibly switch if the economics justify it. This is the design principle behind Warewulf Pro: it supports any x86_64 or ARM64 hardware capable of PXE boot, so procurement decisions stay with the people buying the hardware.

Planning a hardware refresh? See how Warewulf Pro handles stateless provisioning across mixed-vendor environments, or explore RLC+ for free GPU driver integration out of the box.

OpenHPC standardization makes skills and configurations portable

Warewulf has been OpenHPC's provisioner since the project's 1.0 release in November 2015. That matters for three reasons:

Skills transfer. When you hire an administrator with OpenHPC experience, they can be productive on day one, whether they built that experience at a university, a national lab, or a commercial HPC center. That's a wider talent pool than any proprietary platform can offer.

Community troubleshooting. Problems someone else solved get documented and shared. You're not limited to vendor support channels and their response times.

Governance protects against roadmap changes. No single company can deprecate features or shift priorities to serve a different market. The project's direction responds to community needs.

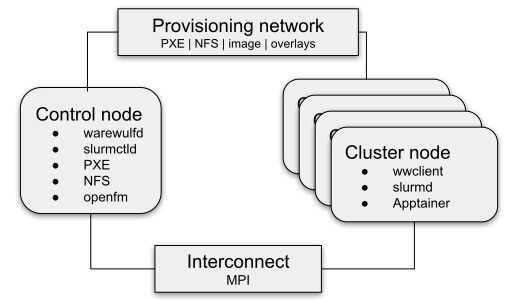

Warewulf Pro builds on this foundation with turn-key node images for OpenHPC clusters. Both Slurm and OpenPBS schedulers are supported, with Apptainer pre-installed and ready for containerized workloads from first boot.

The OS layer reinforces the same principle. RLC+, CIQ's free Rocky Linux distribution, ships with pre-integrated GPU drivers so GPU support works from first boot without manual driver installation. For teams running GPU-accelerated AI workloads in production, RLC Pro AI adds a pre-validated NVIDIA CUDA stack, kernel-level optimizations for inference, and commercial support. Neither constrains which hardware you deploy.

Five questions that reveal actual platform flexibility

Before committing to any cluster management platform, these questions separate real vendor independence from marketing claims:

-

What hardware does the platform actually support? Ask for examples of customers running mixed-vendor GPU environments. Warewulf Pro answer: any PXE-capable x86_64 or ARM64 hardware, no certified hardware lists required.

-

Who controls the roadmap? If one company controls feature priorities, understand their incentives. Warewulf's roadmap is shaped by OpenHPC community governance.

-

What's the exit path? Warewulf Pro's configurations and images use OpenHPC-standard layouts. The underlying platform is open source; you retain the option to operate independently.

-

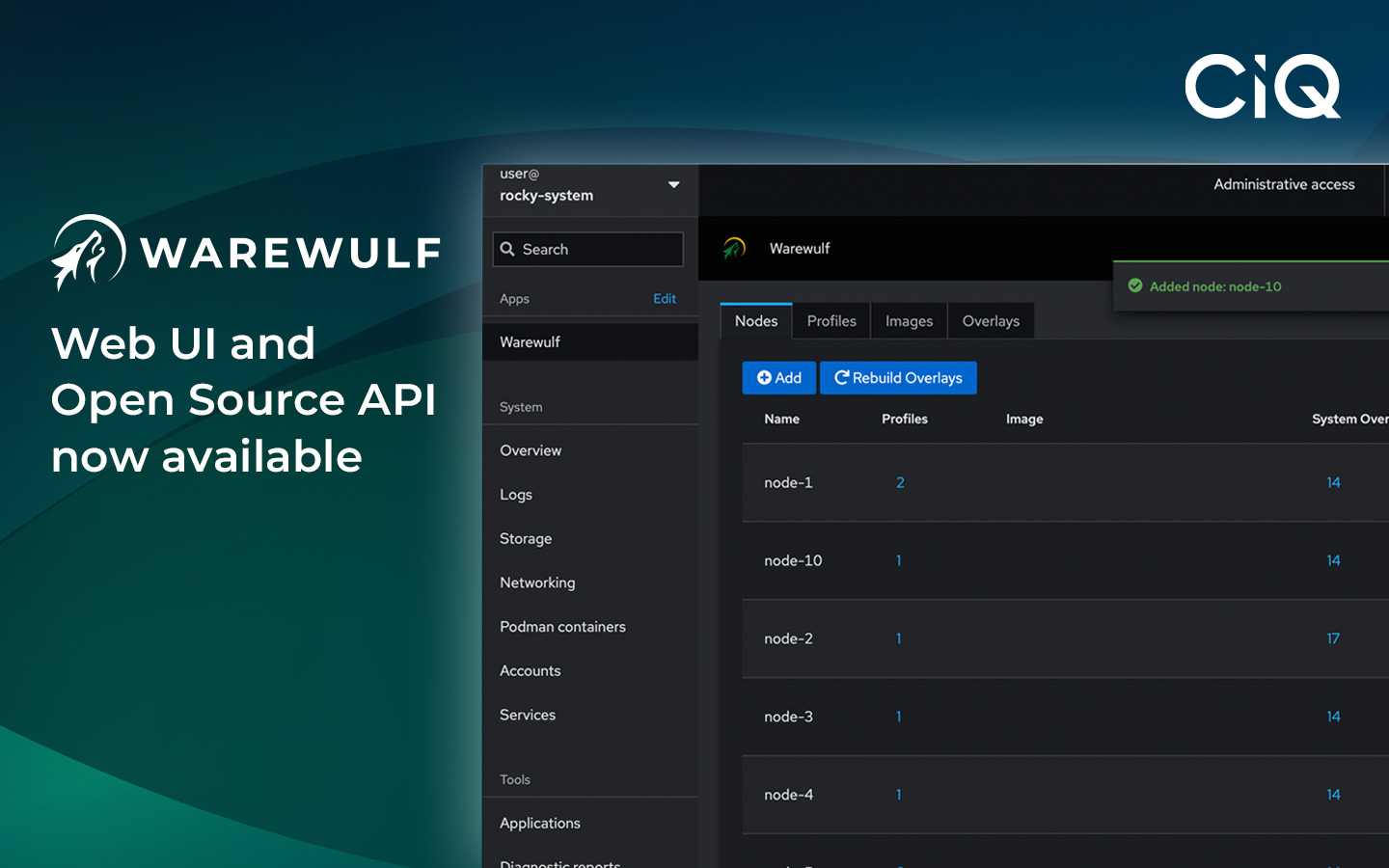

Do contributions flow upstream? CIQ contributes features back to community Warewulf. The REST API underlying Warewulf Pro, for example, was contributed upstream in v4.6.1.

-

What's the five-year total cost of ownership? Include licensing, training, and the opportunity cost of hardware constraints. Warewulf Pro offers both per-node subscriptions and Enterprise License Agreements.
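The five-year cost question can be roughed out as simple arithmetic. The sketch below adds up the three cost categories named above; the `PlatformCosts` fields, the `five_year_tco` helper, and all numbers are assumptions made for illustration, so substitute figures from your own quotes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformCosts:
    annual_licensing: float         # subscription or license fees per year
    one_time_training: float        # onboarding and retraining, paid once
    annual_hardware_premium: float  # estimated opportunity cost of hardware constraints


def five_year_tco(costs: PlatformCosts, years: int = 5) -> float:
    """Recurring costs over the period plus one-time costs."""
    recurring = costs.annual_licensing + costs.annual_hardware_premium
    return years * recurring + costs.one_time_training


# Hypothetical inputs, not real prices.
vendor_tied = PlatformCosts(
    annual_licensing=50_000, one_time_training=20_000, annual_hardware_premium=40_000
)
vendor_neutral = PlatformCosts(
    annual_licensing=60_000, one_time_training=30_000, annual_hardware_premium=0
)

tied_total = five_year_tco(vendor_tied)
neutral_total = five_year_tco(vendor_neutral)
print(tied_total, neutral_total)
```

The hardware premium is the hardest input to estimate. One way to approximate it is the gap between the best quote you could accept and the best quote available if any vendor were an option.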

The tradeoff is real

Platforms tied to a hardware vendor can offer tighter integration and faster feature delivery for their specific hardware. That has value for organizations committed to a single vendor's product line with no plans to diversify.

For teams running diverse workloads, planning hardware refreshes across vendors, or serving researchers with varied requirements, vendor independence matters more. Most HPC organizations fall into this category.
