Container workflows in HPC: Integrating Apptainer with cluster management

Containers have changed how researchers package and share computational workflows. But integrating container runtimes with cluster infrastructure isn't automatic: it requires coordination between provisioning, job scheduling, and storage. Here's how modern cluster management handles container workflows.

Containers in HPC context

Why Docker doesn't fit HPC security models

Docker transformed software deployment in web and cloud environments, but it was never designed for the shared, multi-user reality of HPC clusters. The core problem is privilege: Docker requires a persistent, root-owned daemon to manage containers, and users with Docker access effectively gain root access to the host system. On a shared supercomputer serving hundreds of researchers simultaneously, that is an unacceptable security posture.

HPC clusters operate on a principle of least privilege. Users run jobs as themselves, cannot escalate permissions, and cannot interact with root-owned system daemons on compute nodes. Docker's architecture fundamentally conflicts with this model, which is why most HPC centers have never deployed it on production systems.

Apptainer's design for multi-user environments

Apptainer (formerly Singularity, adopted by the Linux Foundation in 2021) was built from the ground up to solve exactly this problem. Its architecture flips Docker's security model: containers run as the user who launches them, with no elevated privileges inside or outside the container. There is no persistent daemon; Apptainer executes a container image as a process, the same way the OS runs any other binary.

This design has two major implications. First, Apptainer can be safely installed on shared HPC systems and made available to all users without creating a privilege escalation vector. Second, because containers run as ordinary user processes, they integrate naturally with workload managers like Slurm — the scheduler sees an Apptainer container exactly the same way it sees any other job.

Apptainer's container format, SIF (Singularity Image Format), reinforces this philosophy. A SIF file is a single, immutable, compressed file containing the entire container environment: operating system, libraries, application code, and metadata. SIF files can be cryptographically signed and optionally encrypted, making it easy to verify image integrity without an external registry. From the filesystem's perspective, a SIF image is just a file, which means it can be owned, assigned permissions, and distributed using standard Unix tools.
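As a rough illustration of how signature checks can gate execution, the sketch below runs a command only if `apptainer verify` succeeds. The function name `run_verified` and the image path in the example are hypothetical, and the sketch assumes the image was signed with `apptainer sign` and that the signer's public key is available to the user doing the verification.

```shell
#!/bin/bash
# Sketch: only execute a SIF image whose signature verifies.
# run_verified is a hypothetical helper, not part of Apptainer.

run_verified() {
    local img="$1"
    shift
    # `apptainer verify` returns a non-zero status when verification fails.
    if ! apptainer verify "$img" >/dev/null 2>&1; then
        echo "refusing to run unverified image: $img" >&2
        return 1
    fi
    apptainer exec "$img" "$@"
}

# Example call (image path and command are placeholders):
#   run_verified /shared/images/myapp_v2.sif myapp --version
```

Because the check needs only the image file and a key, it works the same way on a laptop and on an air-gapped compute node.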

Container use cases in research computing

Containers have become indispensable across a wide range of HPC workloads. Bioinformatics pipelines—with their notoriously complex dependency chains—are among the most common use cases; tools like GATK, BWA, and Nextflow workflows now routinely ship as container images. Machine learning researchers use containers to freeze CUDA, cuDNN, and framework versions for reproducible training runs. Legacy scientific codes that depend on older library versions can be containerized and run on modern OS distributions without recompilation.

At the National Institutes of Health, the Biowulf HPC cluster has used Apptainer as its preferred software installation method since 2020, managing hundreds of scientific applications as container images. The method keeps the container layer completely invisible to end users: researchers simply run commands as if the software were installed natively.

Integration architecture

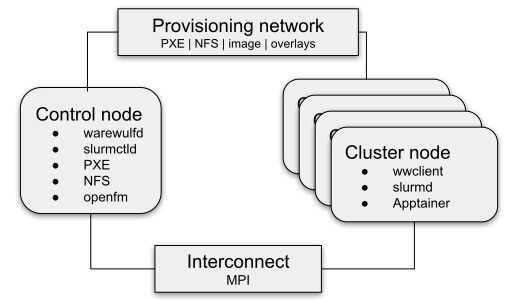

Container runtime on compute nodes

Deploying Apptainer on a large HPC cluster requires deliberate planning. Compute nodes in a modern cluster are typically stateless: they boot from a network-provisioned OS image and carry no persistent local state between jobs. This means Apptainer must either be included in the node image itself or installed on a network file system that is mounted at runtime.

In either scenario, every compute node in the cluster has identical, consistent container support from first boot. This eliminates a whole class of "works on some nodes, not others" debugging issues and ensures that container-dependent jobs can be scheduled to any available node without restriction.

Image storage and distribution

Where container images live is a critical architectural decision. Apptainer SIF files range from a few hundred megabytes to several gigabytes, and on a busy cluster many jobs may need to access the same image simultaneously. Three common strategies exist:

- Shared parallel filesystem: Storing SIF files on a shared filesystem (Lustre, GPFS, or BeeGFS) makes images universally accessible to all nodes with no distribution overhead. Parallel filesystems are optimized for large-file access, and SIF files—being single large files rather than thousands of small ones—frequently perform better than traditional software installations in this environment.

- Per-node caching: For clusters with local NVMe or SSD storage on compute nodes, images can be cached locally on first use. Subsequent jobs reuse the cached copy, reducing filesystem load at scale.

- Container registries: Apptainer can pull images at job runtime directly from OCI-compatible registries (Docker Hub, GitHub Container Registry) and from the Sylabs Cloud Library. This is convenient for development, but it makes network latency and registry availability dependencies for production jobs.
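The per-node caching strategy can be sketched in a few lines of shell. This is a minimal illustration, not a feature of Apptainer itself: the function name `stage_image`, the `LOCAL_IMAGE_CACHE` variable, and the default cache location are all assumptions made for the example.

```shell
#!/bin/bash
# Sketch of per-node caching: copy a SIF image from shared storage to
# node-local storage on first use, then reuse the local copy.
# stage_image and LOCAL_IMAGE_CACHE are hypothetical names.

stage_image() {
    local src="$1"
    local cache_dir="${LOCAL_IMAGE_CACHE:-${TMPDIR:-/tmp}/sif-cache-$(id -u)}"
    local dest="${cache_dir}/$(basename "$src")"
    local tmp

    mkdir -p "$cache_dir" || return 1
    # Copy when the cached file is missing or older than the source.
    if [ ! -f "$dest" ] || [ "$src" -nt "$dest" ]; then
        # Copy to a temporary name, then rename, so other jobs on the
        # same node never see a partially written image.
        tmp="$(mktemp "${dest}.XXXXXX")" || return 1
        cp "$src" "$tmp" && mv -f "$tmp" "$dest" || { rm -f "$tmp"; return 1; }
    fi
    # Print the local path so callers can pass it to apptainer.
    printf '%s\n' "$dest"
}

# Example use inside a job script (paths are placeholders):
#   img="$(stage_image /shared/images/myapp_v2.sif)" && apptainer exec "$img" myapp
```

The copy-then-rename step matters on busy nodes, where several jobs may try to stage the same image at once.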

User namespace and permission handling

Apptainer uses Linux user namespaces to run containers without root privilege. Inside a container, a user can appear to be root—useful during image build steps—but that mapping is confined to the container; on the host, the process still runs as the unprivileged user. A researcher can build a container on their laptop with root-like access inside the container, then run that same image on a shared cluster node with no privilege changes.

Bind mounts, the mechanism by which host directories become accessible inside the container, can be configured by cluster administrators in Apptainer's global configuration files. Home directories, scratch space, and project storage are typically bind-mounted automatically, so users access their data from inside containers exactly as they would from the host. Users can also bind additional directories at runtime with the --bind flag or the APPTAINER_BINDPATH environment variable.
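For user-specified binds, a minimal sketch of the two runtime options might look like the following. The helper names and every path in the examples are placeholders; only the `--bind` flag and the `APPTAINER_BINDPATH` variable come from Apptainer.

```shell
#!/bin/bash
# Sketch: two ways to expose a host directory inside a container.
# run_with_bind and set_job_binds are hypothetical helper names.

# Per-command flag. A bind spec has the form host_path[:container_path].
run_with_bind() {
    local bind_spec="$1" img="$2"
    shift 2
    apptainer exec --bind "$bind_spec" "$img" "$@"
}

# Environment variable, applied to every later apptainer call in the job.
set_job_binds() {
    export APPTAINER_BINDPATH="$1"
}

# Example calls (all paths are placeholders):
#   run_with_bind /scratch/myproject:/data /shared/images/myapp_v2.sif ls /data
#   set_job_binds /scratch/myproject:/data
```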

Cluster management integration points

Pre-installing Apptainer in node images

The cleanest way to deploy Apptainer across a cluster is to include it in the provisioned node image. When Apptainer is built into the image, administrators gain centralized version control: upgrading Apptainer cluster-wide means updating the node image and reprovisioning nodes, not logging into each node individually. Site-specific settings—default bind paths, GPU support flags, allowed network namespaces—are baked into the image and apply uniformly to every node from the first boot.

Overlay configuration for container support

Apptainer supports writable overlay filesystems that allow temporary modifications to otherwise immutable SIF containers at runtime. This is useful when an application needs to write to locations inside the container (log files, temporary caches) without modifying the underlying image. Administrators can configure persistent overlay directories or tmpfs-backed overlays depending on the workload requirements.
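The two overlay styles can be sketched as small helpers. The function names, file names, and the default size are illustrative assumptions; the `overlay create`, `--overlay`, and `--writable-tmpfs` options are standard Apptainer features.

```shell
#!/bin/bash
# Sketch: persistent and throwaway overlays on top of an immutable SIF.
# Helper names and the default size are assumptions for this example.

# Create a persistent overlay image; --size is given in MiB.
make_overlay() {
    local file="$1" size_mib="${2:-512}"
    apptainer overlay create --size "$size_mib" "$file"
}

# Writes made inside the container are stored in the overlay file.
run_with_overlay() {
    local overlay="$1" img="$2"
    shift 2
    apptainer exec --overlay "$overlay" "$img" "$@"
}

# Writes go to an in-memory filesystem and are discarded at exit.
run_scratch() {
    local img="$1"
    shift
    apptainer exec --writable-tmpfs "$img" "$@"
}

# Example calls (paths are placeholders):
#   make_overlay /scratch/me/myapp_overlay.img 1024
#   run_with_overlay /scratch/me/myapp_overlay.img /shared/images/myapp_v2.sif myapp
```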

Shared storage for container images

A practical organization strategy, used at NIH Biowulf, places container images under a versioned directory hierarchy that mirrors traditional HPC module installations:

    /software/apps/
    └── bwa/
        └── 0.7.17/
            ├── bin/
            │   └── bwa -> ../libexec/wrapper.sh
            ├── libexec/
            │   ├── app.sif
            │   └── wrapper.sh
            └── src/
                └── app.def

When a user loads the bwa module via Lmod, the bin/ directory is added to their PATH. Running bwa silently invokes the wrapper script, which translates the call into an apptainer exec directive against the SIF file. The container is completely invisible to the user.
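To make the layout above concrete, here is a sketch of a helper that populates one versioned application directory. The function `install_app` and its argument order are hypothetical; this is not a Biowulf tool, only one way the directory tree could be assembled.

```shell
#!/bin/bash
# Sketch: build the versioned layout for one containerized application.
# install_app is a hypothetical helper, not an existing tool.
# Usage: install_app ROOT NAME VERSION SIF DEF WRAPPER COMMAND...

install_app() {
    local root="$1" name="$2" version="$3" sif="$4" def="$5" wrapper="$6"
    shift 6                      # remaining arguments: commands to expose
    local prefix="${root}/${name}/${version}"
    local cmd

    mkdir -p "${prefix}/bin" "${prefix}/libexec" "${prefix}/src" || return 1
    cp "$sif" "${prefix}/libexec/app.sif" || return 1
    cp "$def" "${prefix}/src/app.def" || return 1
    install -m 0755 "$wrapper" "${prefix}/libexec/wrapper.sh" || return 1

    # One relative symlink per exposed command, all pointing at the wrapper.
    for cmd in "$@"; do
        ln -sfn ../libexec/wrapper.sh "${prefix}/bin/${cmd}" || return 1
    done
}

# Example call (source file names are placeholders):
#   install_app /software/apps bwa 0.7.17 bwa.sif bwa.def wrapper.sh bwa
```

Relative symlinks keep the tree relocatable, so the same directory can be copied or mounted at a different path without breaking the links.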

Job scheduler integration

Slurm and Apptainer workflow

Slurm is the dominant workload manager in modern HPC, and Apptainer integrates with it without plugins or special configuration. Because containers run as ordinary user processes with no daemon involvement, Slurm tracks and manages them like any other job. A basic submission script for a containerized application looks nearly identical to one running a native binary:

    #!/bin/bash
    #SBATCH --job-name=my_analysis
    #SBATCH --ntasks=1
    #SBATCH --cpus-per-task=8
    #SBATCH --mem=32G
    #SBATCH --time=04:00:00

    apptainer exec /shared/images/myapp_v2.sif myapp --input data.csv --output results/

The container inherits the job's resource allocation, environment variables, and working directory automatically.

Resource allocation for containerized jobs

Multi-node MPI jobs require additional coordination. Apptainer supports MPI through a hybrid model: the MPI library must be present both inside the container and on the host, with ABI-compatible versions. The host's mpirun or Slurm's srun launches the job across nodes, and each rank starts its own Apptainer container instance. Because there is no central daemon, this model scales naturally: nothing serializes container launches, so hundreds of nodes can start their ranks at the same time.

When the batch scheduling system includes support for PMI/PMIx, it can be used to start containerized MPI jobs without requiring an MPI implementation on the host. See our previous blog post for a detailed description of this approach.
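The two launch styles described above might be sketched as follows. The job parameters, image path, and application path are placeholders, and the helper names are hypothetical. The PMIx variant assumes a Slurm installation built with PMIx support and an MPI library inside the container that can use it; neither is guaranteed on a given cluster.

```shell
#!/bin/bash
#SBATCH --job-name=mpi_container
#SBATCH --nodes=4
#SBATCH --ntasks-per-node=16
#SBATCH --time=01:00:00

# Hybrid model: the host's mpirun starts the ranks, and every rank
# runs inside its own container instance.
launch_hybrid() {
    local ntasks="$1" img="$2"
    shift 2
    mpirun -np "$ntasks" apptainer exec "$img" "$@"
}

# PMIx model: srun starts the ranks directly, with no host MPI involved.
# Assumes Slurm was built with PMIx support.
launch_pmix() {
    local img="$1"
    shift
    srun --mpi=pmix apptainer exec "$img" "$@"
}

# Launch only when running inside a Slurm allocation.
# Image and binary paths are placeholders.
if [ -n "${SLURM_JOB_ID:-}" ]; then
    launch_pmix /shared/images/mpi_app.sif /opt/app/bin/mpi_app
    # Hybrid alternative:
    #   launch_hybrid "$SLURM_NTASKS" /shared/images/mpi_app.sif /opt/app/bin/mpi_app
fi
```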

GPU passthrough in containers

GPU support in Apptainer is remarkably straightforward. Adding the --nv flag to any apptainer exec or apptainer run command passes through all NVIDIA GPUs allocated to the job:

    #!/bin/bash
    #SBATCH --gres=gpu:2

    apptainer exec --nv /shared/images/pytorch_cuda.sif python train.py

Devices visible via nvidia-smi on the host are visible inside the container. The container uses the host's NVIDIA drivers alongside its own CUDA libraries, so a single image can run on clusters with different driver versions, as long as each host driver is new enough to support the CUDA version inside the image.

Practical implementation

Deploying a transparent containerized workflow

The transparent installation method developed at NIH Biowulf is arguably the most operationally elegant approach for deploying containerized software on shared HPC clusters. The centerpiece is a small wrapper script that intercepts command calls and converts them into apptainer exec directives:

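    #!/bin/bash
    # Ignore any bind paths inherited from the user's environment.
    export APPTAINER_BINDPATH=""
    # The name this script was invoked as, i.e. the symlink name (e.g. bwa).
    cmd="$(basename "$0")"
    # Resolve the symlink to find the directory that holds the image.
    dir="$(dirname "$(readlink -f "${BASH_SOURCE[0]}")")"
    img="app.sif"
    apptainer exec "${dir}/${img}" "$cmd" "$@"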

Symbolic links named after each exposed command point to this script. When a user runs bwa mem ref.fa reads.fq, they are actually running apptainer exec app.sif bwa mem ref.fa reads.fq, but they never see the container layer. Deploying a new software version is as simple as dropping a new SIF file into the directory structure and updating the symlinks.

Image caching strategies

For workflows that pull images from public registries, Apptainer's local cache (~/.apptainer/cache by default) prevents redundant downloads. On clusters with shared storage, administrators can redirect the cache to a shared path, allowing a single pull to serve the entire user community. Prepopulating the cache with commonly used images during node provisioning further reduces job startup latency.
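A minimal sketch of cache redirection and prefetching is shown below. `APPTAINER_CACHEDIR` is the variable Apptainer reads for its cache location; the helper names and all paths are assumptions, and a shared cache directory would need permissions that suit the site.

```shell
#!/bin/bash
# Sketch: redirect the Apptainer cache and prefetch an image to a fixed path.
# use_shared_cache and prefetch_image are hypothetical helper names.

# Point Apptainer's cache at a different directory for this shell or job.
use_shared_cache() {
    export APPTAINER_CACHEDIR="$1"
}

# Pull an image to a known location unless it is already there.
prefetch_image() {
    local uri="$1" dest="$2"
    if [ -f "$dest" ]; then
        return 0
    fi
    apptainer pull "$dest" "$uri"
}

# Example calls (paths are placeholders):
#   use_shared_cache /shared/apptainer-cache
#   prefetch_image docker://ubuntu:22.04 /shared/images/ubuntu_22.04.sif
```

Prefetching to a fixed SIF path means production jobs can reference a local file and never contact a registry at run time.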

Troubleshooting common issues

The most frequent issues with Apptainer in HPC environments fall into a few categories: missing bind mounts (a required host path isn't visible inside the container), MPI version mismatches (host and container MPI libraries are incompatible), and overlay permission errors (the overlay directory isn't writable by the job user). Apptainer's --debug flag produces verbose output that typically pinpoints the problem quickly. For MPI issues, checking PMI/PMIx compatibility between the host's process manager and the container's MPI build resolves the majority of cases.
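Two of these failure modes can be caught on the host before the container starts. The sketch below is a hypothetical pre-flight check, not an Apptainer feature: `preflight` takes a comma-separated bind list in the same form `--bind` accepts, plus an optional overlay directory, and reports anything missing or unwritable.

```shell
#!/bin/bash
# Sketch: host-side checks for missing bind sources and an unwritable
# overlay directory. preflight is a hypothetical helper.
# Usage: preflight "host1[:dest1],host2[:dest2]" [overlay_dir]

preflight() {
    local binds="$1" overlay_dir="${2:-}"
    local status=0 spec host_path
    local -a specs

    # Split the comma-separated bind list into individual specs.
    IFS=',' read -r -a specs <<< "$binds"
    for spec in "${specs[@]}"; do
        host_path="${spec%%:*}"          # host side of host[:dest[:opts]]
        if [ ! -e "$host_path" ]; then
            echo "missing bind source: $host_path" >&2
            status=1
        fi
    done

    if [ -n "$overlay_dir" ] && [ ! -w "$overlay_dir" ]; then
        echo "overlay directory not writable: $overlay_dir" >&2
        status=1
    fi
    return "$status"
}

# Example use in a job script (paths are placeholders):
#   preflight "/scratch/myproject:/data" /scratch/me/overlays || exit 1
#   apptainer --debug exec /shared/images/myapp_v2.sif myapp
```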

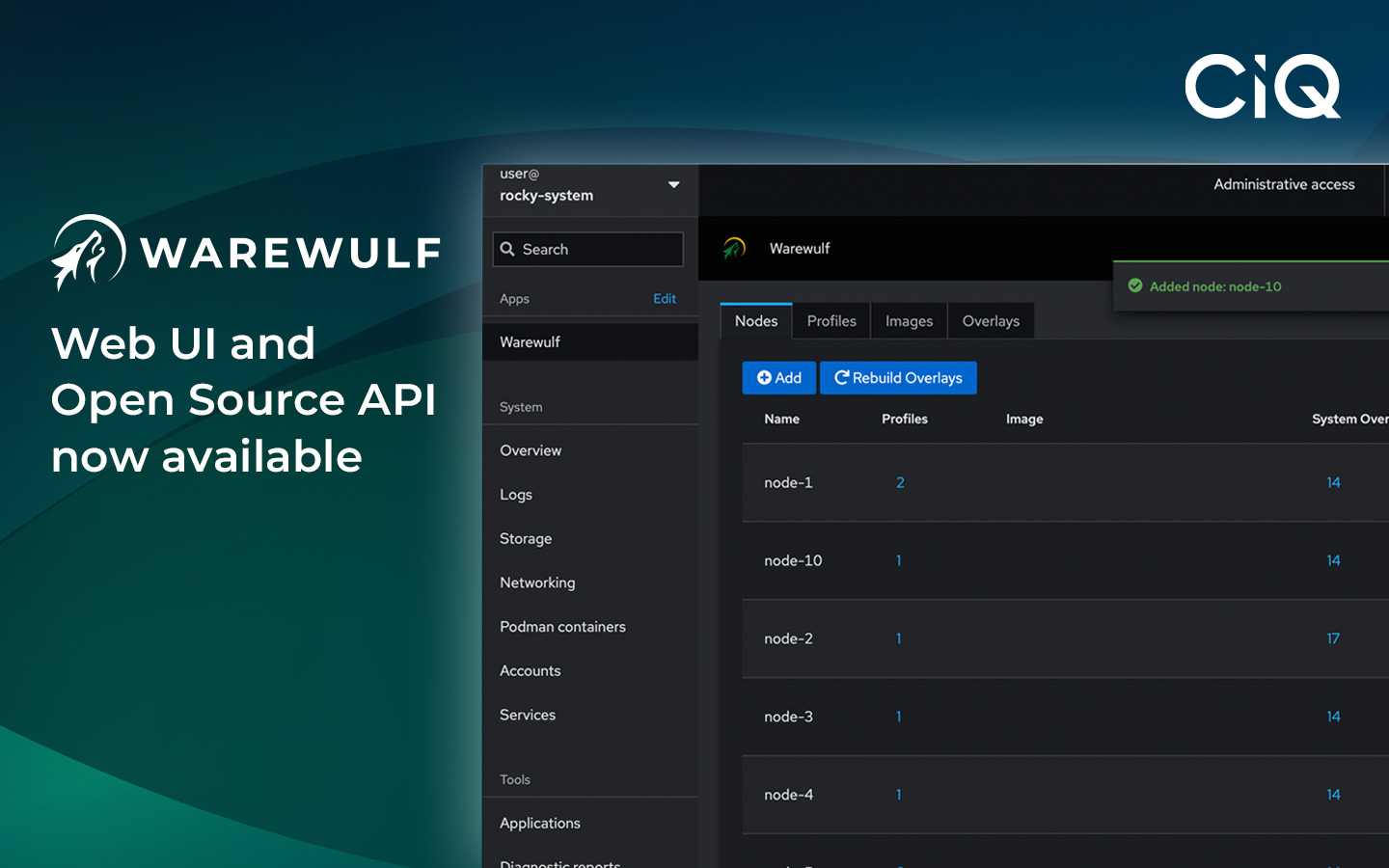

Warewulf Pro's integrated approach

Warewulf Pro provides a purpose-built path to integrated Apptainer deployment. Apptainer comes pre-installed in Warewulf-managed node images, eliminating manual provisioning steps that create inconsistencies across a cluster. Container-aware provisioning ensures that as nodes are added or replaced, they come online immediately with full Apptainer support and consistent, centrally managed configuration.

For HPC teams evaluating cluster management platforms, this integration removes significant operational friction. There is no separate Apptainer deployment project, no per-node configuration drift, and no version mismatches between nodes. The result is a cluster where containerized workflows are first-class citizens from day one: researchers get portable, reproducible containers, and administrators get the software management simplicity that containerization promises.

Ready to see this in action? Request a demo to see Apptainer integration with Warewulf Pro.
