Configuring software RAID in Warewulf nodes for high-performance computing

Contributors

Arian Cabrera

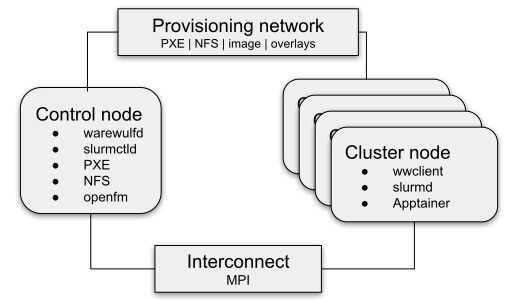

Five drives installed on a Warewulf compute node and left idle represent wasted capacity for every job. This guide shows how to configure Warewulf to assemble them into a software RAID (Redundant Array of Independent Disks) array using mdadm and Ignition. Provisioning is fully automated, so every node boots with a healthy, mounted array and no manual per-node work is required. The setup uses two profiles to separate responsibilities: one applies on every boot, and the other applies only when you need to wipe and rebuild the drives.

Warewulf is an open source node provisioning project; CIQ actively maintains it with the community and offers Warewulf Pro for production environments that need enterprise support.

What you need before you start

This guide assumes that a Warewulf server is installed and that nodes boot successfully from a Rocky Linux 9 or Rocky Linux 10 image.

Ignition, which handles disk partitioning and RAID assembly at boot time, is not available for Rocky Linux 8, so this guide requires Rocky Linux 9 or later. On Rocky Linux 9, Ignition is available from the AppStream repository.

You also need Warewulf 4.6.5 or later, with two-stage booting through dracut enabled.
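Before you start, you can confirm that an image really is Rocky Linux 9 or later by reading its /etc/os-release file. The sketch below is a hypothetical helper: is_supported_rocky is not a Warewulf command. It reads os-release content from standard input and succeeds only when ID is rocky and the major part of VERSION_ID is 9 or higher. It does not check the Warewulf version or the two-stage boot setting, so verify those separately.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the os-release data on stdin
# describes Rocky Linux 9 or later (Ignition is unavailable on Rocky Linux 8).
is_supported_rocky() {
  local key value id="" version_id=""
  # os-release lines are KEY=value, optionally with double quotes around the value.
  # The extra test also handles a final line that has no trailing newline.
  while IFS='=' read -r key value || [ -n "$key" ]; do
    value="${value%\"}"
    value="${value#\"}"
    case "$key" in
      ID) id="$value" ;;
      VERSION_ID) version_id="$value" ;;
    esac
  done
  # Keep only the major version, for example "9.5" becomes "9".
  local major="${version_id%%.*}"
  [ "$id" = "rocky" ] && [ "${major:-0}" -ge 9 ] 2>/dev/null
}

# Example use against an image named rockylinux-9:
#   wwctl image exec --build=false rockylinux-9 -- cat /etc/os-release | is_supported_rocky && echo "image is supported"
```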

Install mdadm and Ignition in your Rocky Linux image

Prepare the image

The cleanest way to manage Warewulf images is with a Containerfile. It keeps your image configuration version-controlled, reproducible, and easy to update when new package versions ship. It also spares you from shelling into images by hand and forgetting what you changed three months ago. Add the following to your Containerfile to install Ignition and mdadm, install the Warewulf dracut module, and rebuild the initramfs:

```dockerfile
FROM ghcr.io/warewulf/warewulf-rockylinux:9

# Warewulf version to install
ARG WAREWULF_VERSION=4.6.5

# Install ignition, mdadm, and the Warewulf dracut module
RUN dnf update -y \
    && dnf install -y --allowerasing \
        ignition \
        mdadm \
    && dnf install -y https://github.com/warewulf/warewulf/releases/download/v${WAREWULF_VERSION}/warewulf-dracut-${WAREWULF_VERSION}-1.el9.noarch.rpm \
    && dnf clean all

# Rebuild the initramfs with the wwinit, ignition, and mdraid dracut modules
RUN dracut --force --no-hostonly \
        --add wwinit \
        --add ignition \
        --add mdraid \
        --regenerate-all
```

The mdraid module ensures the RAID array is recognized during the initramfs boot phase. The ignition module runs disk partitioning and RAID assembly. The wwinit module is Warewulf's init stage that coordinates the full boot sequence.
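To confirm that all three modules made it into the initramfs, you can scan the lsinitrd listing. That listing includes a section that names each dracut module on its own line. The helper below is a hypothetical sketch that assumes this format. When run without arguments on a booted node, lsinitrd inspects the initramfs of the running kernel.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read lsinitrd output on stdin and report whether the
# wwinit, ignition, and mdraid dracut modules are all listed.
# Assumption: lsinitrd prints each included dracut module name on its own line.
check_initramfs_modules() {
  local listing mod missing=0
  listing=$(cat)
  for mod in wwinit ignition mdraid; do
    # -x matches whole lines only, so file paths that contain the name do not count.
    if ! grep -qx -- "$mod" <<<"$listing"; then
      echo "missing dracut module: $mod" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example use on a booted node:
#   lsinitrd | check_initramfs_modules && echo "all modules present"
```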

Once the Containerfile is ready, build and import the image into Warewulf using a container tool of your choice. The following example uses Podman.

```console
# sudo podman build . --file Containerfile --tag rl-demo
# sudo wwctl image import $(sudo podman image mount localhost/rl-demo) rl-demo
# sudo podman image unmount localhost/rl-demo
# sudo wwctl image build rl-demo
```

Prefer working directly on an existing image? You have two options. If you want an interactive session, shell in with wwctl image shell:

```console
# wwctl image shell rockylinux-9
Image build will be skipped if the shell ends with a non-zero exit code.
[warewulf:rockylinux-9] /# dnf install -y ignition mdadm
[warewulf:rockylinux-9] /# dnf install -y https://github.com/warewulf/warewulf/releases/download/v4.6.5/warewulf-dracut-4.6.5-1.el$(rpm -E '%{rhel}').noarch.rpm
[warewulf:rockylinux-9] /# dracut --force --no-hostonly --add wwinit --add ignition --add mdraid --regenerate-all
[warewulf:rockylinux-9] /# exit
```

Or, if you would rather run the commands non-interactively, wwctl image exec is a good option. Pass --build=false on every command except the last, so the image is rebuilt only once, after the final command:

```console
[root@warewulf ~]# wwctl image exec --build=false rockylinux-9 -- dnf install -y ignition mdadm
[root@warewulf ~]# wwctl image exec --build=false rockylinux-9 -- dnf install -y https://github.com/warewulf/warewulf/releases/download/v4.6.5/warewulf-dracut-4.6.5-1.el9.noarch.rpm
[root@warewulf ~]# wwctl image exec rockylinux-9 -- dracut --force --no-hostonly --add wwinit --add ignition --add mdraid --regenerate-all
```

If you used wwctl image shell and the shell exited with a non-zero exit code, the automatic rebuild will have been skipped. In that case, trigger it manually by running:

```console
[root@warewulf ~]# wwctl image build rockylinux-9
```

Build the overlay that generates mdadm.conf from node resources

The mdadm overlay will generate /etc/mdadm.conf on each node from the RAID configuration stored in that node's resources.

Create the overlay:

```console
# wwctl overlay create mdadm
```

Edit the mdadm.conf template:

```console
# wwctl overlay edit mdadm -p /etc/mdadm.conf.ww
```

Clear the file and replace its contents with the following:

```
{{- if index .Resources "mdadm" }}
# Autogenerated by warewulf
DEVICE partitions
{{- range $raid := index .Resources "mdadm" }}
ARRAY /dev/md/{{ $raid.name }} level={{ $raid.level }} num-devices={{ len $raid.devices }} devices={{ $raid.devices | join "," }}
{{- end }}
{{- else }}
{{ abort }}
{{- end }}
```

This template checks whether an mdadm resource is defined on the node or profile. If it is, it generates an ARRAY line in mdadm.conf for each defined RAID array.

If no mdadm resource is present, abort prevents the overlay from being applied. Nodes without RAID configuration are completely unaffected.
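To preview what the template produces before you boot a node, the shell function below builds the same lines from an array name, a RAID level, and a list of member devices. It is a hypothetical stand-in that mimics the template's logic: one ARRAY line, num-devices taken from the length of the list, and devices joined with commas. It is not how Warewulf renders overlays, and the whitespace in the real rendered file may differ slightly.

```shell
#!/usr/bin/env bash
# Hypothetical helper that mimics what mdadm.conf.ww renders for one array.
# Arguments: array name, RAID level, then one or more member devices.
render_mdadm_conf() {
  local name="$1" level="$2"
  shift 2
  # With IFS set to a comma, "$*" joins the members the way the template's join "," does.
  local IFS=','
  printf '# Autogenerated by warewulf\nDEVICE partitions\n'
  printf 'ARRAY /dev/md/%s level=%s num-devices=%d devices=%s\n' \
    "$name" "$level" "$#" "$*"
}

# Example: preview the file for the five-drive array used in this guide.
#   render_mdadm_conf md0 raid0 /dev/disk/by-partlabel/sd{a,b,c,d,e}1
```

For the five members used in this guide, the final line of that preview reads ARRAY /dev/md/md0 level=raid0 num-devices=5 devices=/dev/disk/by-partlabel/sda1,/dev/disk/by-partlabel/sdb1,/dev/disk/by-partlabel/sdc1,/dev/disk/by-partlabel/sdd1,/dev/disk/by-partlabel/sde1.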

Set up the per-boot RAID assembly and mount profile

This profile adds the mdadm overlay and defines the RAID array metadata and the /etc/fstab mount entry. It applies on every boot, so the array is configured and /scratch is mounted each time the node starts.

Add the profile:

```console
# wwctl profile add raid
```

Edit the profile:

```console
# wwctl profile edit raid
```

Navigate to the bottom of the file and replace raid: {} with the following:

```yaml
raid:
  system overlay:
    - mdadm
  resources:
    fstab:
      - spec: /dev/md/md0
        file: /scratch
        vfstype: ext4
        mntops: defaults
    mdadm:
      - name: md0
        level: raid0
        devices:
          - /dev/disk/by-partlabel/sda1
          - /dev/disk/by-partlabel/sdb1
          - /dev/disk/by-partlabel/sdc1
          - /dev/disk/by-partlabel/sdd1
          - /dev/disk/by-partlabel/sde1
```

The fstab resource populates /etc/fstab via Warewulf's built-in fstab overlay. Ensure fstab is present in your system overlays (it is included by default). The mdadm resource is the structured data your mdadm.conf.ww template iterates over to produce mdadm.conf.
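To confirm on a booted node that the fstab overlay rendered the entry you expect, you can check /etc/fstab for a line that matches the spec, mount point, and filesystem type from the profile. The function below is a hypothetical helper that only inspects the file. To check whether the filesystem is actually mounted, findmnt /scratch is the more direct test.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if the fstab content on stdin contains an entry
# with the given spec, mount point, and filesystem type. Comment lines are skipped.
check_fstab_entry() {
  awk -v spec="$1" -v file="$2" -v fstype="$3" '
    /^[ \t]*#/ { next }
    $1 == spec && $2 == file && $3 == fstype { found = 1 }
    END { exit (found ? 0 : 1) }
  '
}

# Example use on a node provisioned with the raid profile:
#   check_fstab_entry /dev/md/md0 /scratch ext4 < /etc/fstab && echo "fstab entry present"
```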

NOTE: Adjust level: raid0 to your desired RAID level (raid5, raid6, raid10, etc.) based on your redundancy and performance requirements, and keep the level identical in the raid and raid-provision profiles. For NVMe drives, replace /dev/sdX with the appropriate /dev/nvmeXnY paths throughout this guide.
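Choosing a level changes how much of the raw space you can use. For N equal drives of size S, the usable capacity is N x S for raid0, (N - 1) x S for raid5, (N - 2) x S for raid6, and N x S / 2 for raid10 with mdadm's default near-2 layout. The sketch below is a rough estimate only. It ignores md metadata, chunk rounding, and filesystem overhead, and the minimum drive counts it enforces are the conventional ones. usable_gib is a hypothetical name.

```shell
#!/usr/bin/env bash
# Rough usable-capacity estimate for an md array of N equal drives.
# Ignores md metadata, chunk rounding, and filesystem overhead.
# Assumption: raid10 uses the near-2 layout, which stores two copies of each block.
usable_gib() {
  local level="$1" n="$2" size="$3"
  case "$level" in
    raid0) echo $(( n * size )) ;;
    raid1) echo "$size" ;;
    raid5)
      [ "$n" -ge 3 ] || { echo "this sketch expects at least 3 drives for raid5" >&2; return 1; }
      echo $(( (n - 1) * size )) ;;
    raid6)
      [ "$n" -ge 4 ] || { echo "raid6 needs at least 4 drives" >&2; return 1; }
      echo $(( (n - 2) * size )) ;;
    raid10)
      [ "$n" -ge 2 ] || { echo "raid10 needs at least 2 drives" >&2; return 1; }
      echo $(( n * size / 2 )) ;;
    *) echo "unsupported level: $level" >&2; return 1 ;;
  esac
}

# Example: five drives of 12 GiB each.
#   for level in raid0 raid5 raid6 raid10; do echo "$level $(usable_gib "$level" 5 12) GiB"; done
```

With five 12 GiB drives, this gives 60 GiB for raid0, 48 GiB for raid5, 36 GiB for raid6, and 30 GiB for raid10. The raid0 figure lines up with the roughly 60 GiB array that mdadm reports after the first boot in this guide. raid0 trades all redundancy for capacity and throughput, raid5 tolerates one failed drive, and raid6 tolerates two.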


Create the provisioning profile that wipes and rebuilds drives on demand

This profile holds the Ignition configuration that wipes drives, creates partitions, assembles the RAID array, and formats the filesystem. It is designed to be applied only when you want to provision (or re-provision) the array.

```console
# wwctl profile add raid-provision
```

Edit the profile:

```console
# wwctl profile edit raid-provision
```

Navigate to the bottom of the file and replace raid-provision: {} with the following:

```yaml
raid-provision:
  system overlay:
    - ignition
  resources:
    ignition:
      storage:
        disks:
          - device: /dev/sda
            partitions:
              - label: sda1
                number: 1
                sizeMiB: 0
                typeGuid: A19D880F-05FC-4D3B-A006-743F0F84911E
            wipeTable: true
          - device: /dev/sdb
            partitions:
              - label: sdb1
                number: 1
                sizeMiB: 0
                typeGuid: A19D880F-05FC-4D3B-A006-743F0F84911E
            wipeTable: true
          - device: /dev/sdc
            partitions:
              - label: sdc1
                number: 1
                sizeMiB: 0
                typeGuid: A19D880F-05FC-4D3B-A006-743F0F84911E
            wipeTable: true
          - device: /dev/sdd
            partitions:
              - label: sdd1
                number: 1
                sizeMiB: 0
                typeGuid: A19D880F-05FC-4D3B-A006-743F0F84911E
            wipeTable: true
          - device: /dev/sde
            partitions:
              - label: sde1
                number: 1
                sizeMiB: 0
                typeGuid: A19D880F-05FC-4D3B-A006-743F0F84911E
            wipeTable: true
        filesystems:
          - device: /dev/md/md0
            format: ext4
            path: /scratch
            wipeFilesystem: true
        raid:
          - devices:
              - /dev/disk/by-partlabel/sda1
              - /dev/disk/by-partlabel/sdb1
              - /dev/disk/by-partlabel/sdc1
              - /dev/disk/by-partlabel/sdd1
              - /dev/disk/by-partlabel/sde1
            level: raid0
            name: md0
```

During the Dracut boot stage, Ignition reads this configuration and performs three operations in order: it wipes each drive's partition table and creates a single partition spanning the full disk (sizeMiB: 0 means use all remaining space); it assembles those partitions into the named RAID array; and it formats the RAID device as ext4 at /scratch. The typeGuid value A19D880F-05FC-4D3B-A006-743F0F84911E marks the partitions as Linux RAID type.

The ignition overlay translates this YAML resource into the JSON configuration consumed by the Ignition binary.
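The disks list in this profile repeats the same stanza for every drive. The partition labels must also match the by-partlabel paths in the raid section here and in the mdadm resource of the raid profile. A small generator keeps all of these consistent. The functions below are hypothetical helpers, not a Warewulf feature. They derive each label as the device's base name plus 1 (sda becomes sda1), as this guide does. They print YAML at top-level indentation, so you need to indent the output to sit under storage: (and the paths under devices:) when you paste it into wwctl profile edit.

```shell
#!/usr/bin/env bash
# Hypothetical helper: emit the Ignition storage.disks list for whole-disk devices.
# Each disk gets one full-size partition labelled <basename>1 with the Linux RAID type GUID.
ignition_disks_yaml() {
  local dev name
  echo "disks:"
  for dev in "$@"; do
    name="${dev##*/}"
    cat <<EOF
  - device: ${dev}
    partitions:
      - label: ${name}1
        number: 1
        sizeMiB: 0
        typeGuid: A19D880F-05FC-4D3B-A006-743F0F84911E
    wipeTable: true
EOF
  done
}

# Hypothetical helper: emit the matching member paths for the Ignition raid
# entry and for the mdadm resource in the raid profile.
partlabel_paths() {
  local dev
  for dev in "$@"; do
    printf -- '- /dev/disk/by-partlabel/%s1\n' "${dev##*/}"
  done
}

# Example for the five drives used in this guide:
#   ignition_disks_yaml /dev/sd{a..e}
#   partlabel_paths /dev/sd{a..e}
```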

WARNING: wipeTable: true and wipeFilesystem: true will destroy all data on the target drives. Do not apply the raid-provision profile to a node unless you intend to re-provision its disks.

Apply both profiles and trigger the first provisioning boot

Apply both profiles to the node, along with any other profiles it needs:

```console
# wwctl node set n1 -P default,raid,raid-provision
```

Rebuild the overlays:

```console
# wwctl overlay build
```

Reboot the node. On this first boot, Ignition will run during the Dracut stage to partition the drives, assemble the RAID, and format /scratch. The mdadm overlay will populate mdadm.conf, and the fstab overlay will ensure /scratch is mounted in the running system.

Confirm the array is healthy after the first boot

After the node comes back online, SSH in and confirm the array is healthy:

```console
# mdadm --detail /dev/md/md0
/dev/md/md0:
           Version : 1.2
     Creation Time : Wed Feb 25 22:21:12 2026
        Raid Level : raid0
        Array Size : 62860800 (59.95 GiB 64.37 GB)
      Raid Devices : 5
     Total Devices : 5
       Persistence : Superblock is persistent

       Update Time : Wed Feb 25 22:21:12 2026
             State : clean
    Active Devices : 5
   Working Devices : 5
    Failed Devices : 0
     Spare Devices : 0

            Layout : original
        Chunk Size : 512K

Consistency Policy : none

              Name : any:md0
              UUID : 7c013665:5180e8aa:59aa2ec1:d5f0afb7
            Events : 0

    Number   Major   Minor   RaidDevice State
       0       8        1        0      active sync   /dev/sda1
       1       8       17        1      active sync   /dev/sdb1
       2       8       33        2      active sync   /dev/sdc1
       3       8       49        3      active sync   /dev/sdd1
       4       8       65        4      active sync   /dev/sde1
```

A State of clean, with all five devices listed as active sync, confirms that the array is assembled and healthy.
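If you check many nodes, a pass/fail test may be more practical than reading the output by eye. The sketch below parses mdadm --detail output from standard input. It succeeds only when State starts with clean or active, Active Devices equals Raid Devices, and Failed Devices is 0. md_detail_healthy is a hypothetical name, and the parser assumes the "Key : value" layout shown in the output above, which may vary between mdadm versions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read mdadm --detail output on stdin and succeed only if
# the array looks healthy. Assumes the "Key : value" layout of mdadm --detail.
md_detail_healthy() {
  awk -F' : ' '
    # Strip the right-alignment padding in front of each key.
    { sub(/^[ \t]+/, "", $1) }
    $1 == "State"          { state = $2 }
    $1 == "Raid Devices"   { raid = $2 + 0 }
    $1 == "Active Devices" { active = $2 + 0 }
    $1 == "Failed Devices" { failed = $2 + 0 }
    END {
      ok = (state ~ /^(clean|active)/ && raid > 0 && active == raid && failed == 0)
      printf "state=%s raid=%d active=%d failed=%d\n", state, raid, active, failed
      exit (ok ? 0 : 1)
    }'
}

# Example use on a node:
#   mdadm --detail /dev/md/md0 | md_detail_healthy
```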

Control whether scratch data survives reboots

With raid-provision in the node's profile, every reboot wipes and re-provisions the array. This is useful when scratch storage should be clean between jobs. To retain data across reboots, remove the provisioning profile:

```console
# wwctl node set n1 -P default,raid
```

Rebuild the overlays:

```console
# wwctl overlay build
```

With raid-provision removed, Ignition no longer wipes the drives. On each boot, mdadm reassembles the existing array from its on-disk superblocks and the fstab entry mounts it at /scratch, so data persists across reboots without further configuration changes.
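If you switch nodes between the two modes often, you can wrap the two commands for each mode in a small function. This is a hypothetical helper, not part of Warewulf: set_scratch_mode and the WWCTL override variable are names introduced here. It assumes that the only other profile the node needs is default. Setting WWCTL=echo prints the commands instead of running them. You still need to reboot the node afterward.

```shell
#!/usr/bin/env bash
# Hypothetical helper: switch a node between persistent scratch (persist) and
# wipe-on-boot scratch (provision), then rebuild overlays.
# Assumption: "default" is the node's only other profile.
# Set WWCTL=echo for a dry run that prints the commands instead of running them.
set_scratch_mode() {
  local node="$1" mode="$2" profiles
  case "$mode" in
    persist)   profiles="default,raid" ;;
    provision) profiles="default,raid,raid-provision" ;;
    *) echo "usage: set_scratch_mode <node> persist|provision" >&2; return 2 ;;
  esac
  "${WWCTL:-wwctl}" node set "$node" -P "$profiles" || return
  "${WWCTL:-wwctl}" overlay build
}

# Example: keep data on n1 across reboots.
#   set_scratch_mode n1 persist
```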

When you need to re-provision (for example, after a drive swap or at the start of a new project), add the profile back:

```console
# wwctl node set n1 -P default,raid,raid-provision
```

Rebuild the overlays:

```console
# wwctl overlay build
```

Reboot the node, and the drives will be wiped and rebuilt cleanly.

Troubleshooting

Ignition does not run on first boot

Ignition requires two-stage booting via dracut. If Ignition does not run, confirm that two-stage boot is enabled in your Warewulf node configuration and that the dracut initramfs was rebuilt with all three modules (wwinit, ignition, mdraid). Re-run the dracut command from the image shell and rebuild the image before rebooting the node. You can also verify /warewulf/ignition.json was generated on the node and check the service logs: journalctl -u ww-ignition.service.

Ignition fails on the first boot but succeeds on the second boot

This is a known issue when a disk has an unreadable or corrupted partition table. Ignition uses sgdisk --zap-all internally to wipe the partition table before creating new partitions. On disks with an unreadable partition table, sgdisk exits with code 2, which Ignition versions earlier than 2.16.2 treat as a fatal error, causing Ignition to abort without creating partitions or filesystems. However, the partition table is still wiped in the process, so the configuration succeeds cleanly on the next reboot. If a node fails to come up on first boot but recovers on the second boot with the array intact, this is the likely cause. No action is required beyond the additional reboot.
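To tell whether a node is exposed to this behavior, you can compare the installed Ignition version against 2.16.2. The sketch below is a generic version comparison built on GNU sort -V. The function name version_lt is hypothetical, and the rpm query in the comments assumes that Ignition was installed as an RPM, as in this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if version $1 is strictly older than version $2,
# using GNU coreutils version ordering (sort -V).
version_lt() {
  [ "$1" != "$2" ] &&
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$1" ]
}

# Example use on a node:
#   v=$(rpm -q --qf '%{VERSION}\n' ignition)
#   if version_lt "$v" 2.16.2; then
#     echo "Ignition $v may need a second boot on disks with corrupted partition tables"
#   fi
```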

mdadm.conf is not generated on the node

The mdadm overlay uses abort to skip nodes that have no mdadm resource defined. If mdadm.conf does not appear, verify that the resource key in your profile is spelled exactly mdadm and that the raid profile is applied to the node. You can inspect the rendered template for a specific node with wwctl overlay show -r <node> mdadm /etc/mdadm.conf.ww.

The RAID array does not assemble after a subsequent reboot

If the array fails to assemble on reboots after the initial provisioning, confirm that the mdraid Dracut module is present in the initramfs (lsinitrd | grep mdraid) and that mdadm.conf on the node contains the correct ARRAY line. Run mdadm --assemble --scan manually to test assembly outside of the boot sequence.

Drives were accidentally wiped on a node that should have retained data

This happens when the raid-provision profile is still attached when the node reboots. If scratch data should survive reboots, remove the profile with wwctl node set <node> -P default,raid and rebuild the overlays as soon as you have confirmed that the array is healthy after the first boot. Any reboot with raid-provision attached wipes the array, so removing it should be the final step of initial setup unless you deliberately want clean scratch storage on every boot.


For more detail on Warewulf provisioning, see the Warewulf disk provisioning documentation.

